Some Background

As cloud penetration has increased over the past ten years, we’ve seen a change in the dynamic between successful public software companies and emerging startups. Rather than replacing companies born a generation earlier with cloud-native solutions, new startups are building on top of the foundations those companies have created.

With that in mind, I’m starting this series to explore the dynamic between leading public software companies and the early-stage startups they are enabling. Each post will focus on one company and drill down on how it changed its market, what others can learn from its success, and most importantly, how younger companies are building in its wake.[1] Snowflake’s recent acquisition of Streamlit (where GGV was an early investor) has shed light on the growing ecosystem around the data warehouse giant, making it an interesting time to look back at the company’s past and think about what opportunities it has created.

Snowflake’s Rise

Snowflake’s origin story exemplifies a theme that has powered SaaS over the last decade – moving to the cloud radically alters markets. The data warehouse was not a new idea when Snowflake (and its big cloud counterparts, Redshift and BigQuery) hit the market, but its cost model and operational overhead made it inaccessible or impractical to a large swath of customers. Standing up an on-prem database has huge upfront costs, and bundling storage and compute meant that as analytics scaled within a company, costs continued to skyrocket. That’s before customers even considered the ongoing headcount burden of supporting a large on-prem system.
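To make the bundling problem concrete, here is a rough sketch of how cost behaves when storage and compute can only be bought together. The node capacities and the price are hypothetical numbers picked for illustration, not real vendor figures, and the function names are my own.

```python
import math

# Hypothetical appliance specs, for illustration only.
NODE_STORAGE_TB = 10     # storage that ships inside one node
NODE_COMPUTE_UNITS = 4   # compute that ships inside the same node
NODE_PRICE = 50_000      # upfront price per node, in dollars


def bundled_nodes(storage_tb: float, compute_units: float) -> int:
    """Nodes required when storage and compute are sold as one unit.

    Whichever dimension needs more nodes sets the size of the whole cluster.
    """
    by_storage = math.ceil(storage_tb / NODE_STORAGE_TB)
    by_compute = math.ceil(compute_units / NODE_COMPUTE_UNITS)
    return max(by_storage, by_compute, 1)


def bundled_cost(storage_tb: float, compute_units: float) -> int:
    """Upfront cost of a cluster sized for the given storage and compute."""
    return bundled_nodes(storage_tb, compute_units) * NODE_PRICE


def idle_compute_units(storage_tb: float, compute_units: float) -> float:
    """Compute that was paid for only because storage forced more nodes."""
    purchased = bundled_nodes(storage_tb, compute_units) * NODE_COMPUTE_UNITS
    return purchased - compute_units


if __name__ == "__main__":
    # Query load stays flat at 4 compute units while stored data doubles.
    print(bundled_cost(100, 4))        # 500000
    print(bundled_cost(200, 4))        # 1000000
    print(idle_compute_units(100, 4))  # 36
```

In this toy setup, doubling the stored data doubles the bill even though query load has not changed, which is the dynamic that pushed customers to store only what they absolutely needed.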

In that context, Snowflake was able to achieve unprecedented growth by aligning a burning market need with an innovative cost model, powered by differentiated technology. In addition to bringing a level of speed and usability that incumbents couldn’t match, Snowflake was able to separate the storage and compute layers of the warehouse, and with underlying hardware getting cheaper at both layers, dramatically lower the cost to store vast quantities of data in the cloud while also removing the operational burden of maintenance. This could not have come at a better time. By the early to mid-2010s, “big data” had become a top priority for businesses of all kinds[2], and Snowflake’s usage-based pricing made adopting a warehouse a no-brainer, low-risk decision. More importantly, beyond initial adoption, cheap storage and pay-as-you-go compute allowed customers to move from a default of storing only the data they absolutely need to a default of storing almost everything. Just look at Snowflake’s NDR, which last quarter was a ridiculous 178% at >$1B ARR scale.
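For readers less familiar with the metric, here is a minimal sketch of how net dollar retention (NDR) is commonly calculated: revenue today from the customers you had a year ago, divided by what those same customers paid a year ago. This is a generic definition, not necessarily Snowflake’s exact reporting methodology, and the customer names and dollar amounts are invented so that the result lands on 178%.

```python
from typing import Mapping


def net_dollar_retention(
    prior: Mapping[str, float], current: Mapping[str, float]
) -> float:
    """Revenue now from the prior-period cohort, divided by their prior revenue.

    Customers who churned count as zero. Customers acquired after the prior
    period are ignored, because NDR only measures the existing base.
    """
    base = sum(prior.values())
    if base <= 0:
        raise ValueError("prior-period revenue must be positive")
    retained = sum(current.get(customer, 0.0) for customer in prior)
    return retained / base


if __name__ == "__main__":
    # Hypothetical annualized revenue per customer, in thousands of dollars.
    a_year_ago = {"acme": 100.0, "globex": 50.0, "initech": 50.0}
    today = {
        "acme": 250.0,      # expanded usage
        "globex": 106.0,    # expanded usage
        # initech churned, so it contributes nothing
        "umbrella": 80.0,   # new customer, excluded from NDR
    }
    print(f"{net_dollar_retention(a_year_ago, today):.0%}")  # 178%
```

An NDR well above 100% means the existing customer base grows on its own, which is what you would expect when pay-as-you-go pricing meets a default of storing almost everything.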

The Snowflake story represents an amped-up version of the traditional on-prem to SaaS market transition that fueled the rise of companies like ServiceNow and Workday. The team leveraged the granularity, ephemerality, and zero-upfront-cost features of a robust cloud ecosystem to not just move customers from perpetual to subscription to consumption, but to alter the pricing model of the warehouse in a way that impacts how companies think about storing and utilizing data. The results have been staggering.

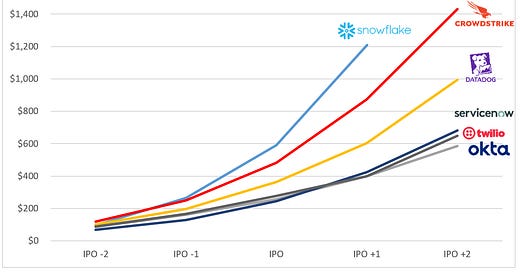

Annual Revenue Pre and Post IPO for Top Infrastructure Companies:

Snowflake Today

The critical component of the Snowflake story that I see powering the next generation of data startups is the mindset shift it enabled in customers; with cloud economics, enterprises can default to throwing any data that may be relevant in the future into a warehouse (or in many cases, a data lake, but that’s a separate discussion). The removal of this bottleneck should, at least in theory, change everything. Companies can now query their data with ease – they should finally be able to realize all those benefits of becoming “data driven” they’ve been promised! However, as with any system, when one bottleneck is removed, another emerges, and while companies can now store and analyze data at massive scale, actually deriving value from that data remains a challenge. That’s another way of saying that many companies have adopted a data warehouse, but don’t yet have the tools to utilize it to its full potential.

As you would expect, Snowflake itself still wants as much data in its product as possible, which further exacerbates the problem. Its best path to maximum long-term data volumes, as the rebrand to the “Data Cloud” suggests, is through use case expansion. Conceptually, the Data Cloud allows enterprises to use the same SQL-compliant database for workloads beyond analytics. Customers can now unify data science, data engineering, and data sharing (traditionally done on data lakes) on their warehouse, and most importantly, Snowflake can act as the back-end for off-the-shelf and custom “data apps” as varied as SIEMs and CRMs. The urgency of this expansion makes sense when you consider the data gravity benefits the company can enjoy. If enterprises unify their data silos on Snowflake, they will likely never leave. One layer deeper, if a specific workflow or data app reads from and writes to Snowflake, it will likely stay Snowflake-centric forever (look at Salesforce’s market position despite horrible UX). So the company has an opportunity to take a huge bite out of the enterprise software market by pushing standardization and use case expansion, and it has every incentive to gain market share before competitors can.[3]

Opportunities in the Snowflake Ecosystem

Though daunting at first, a world in which enterprises are more consolidated on Snowflake creates more, not fewer, opportunities for startups. I break down these opportunities into three categories, listed below in the order I’d expect them to mature.

Unbundling Data Engineering

There’s a reason the biggest tech companies in the world have Data Platform teams hundreds-strong – managing data at scale is very hard and very resource intensive. And if enterprises are relying on their data infrastructure to impact day-to-day business operations, the cost of failure is high. In the past, data management largely consisted of highly skilled (and highly paid) data engineers chipping away at problems like integration, modeling, discoverability, governance, and reliability in the short time they had between putting out fires for downstream data consumers. We’re already seeing those sub-tasks get unbundled and productized by new startups. Fivetran led the way with off-the-shelf integration, and newer startups like dbt Labs and Monte Carlo* have followed in modeling and reliability.

These companies are hitting the market in stride. Enterprises that have committed to becoming data-driven are spending millions on Snowflake, seeing the challenges of data management up close, and are eager to give their data engineers leverage through off-the-shelf tools. Much in the way observability and cloud security have become “must-have” attaches to cloud infrastructure, I expect a subset of these new data tools to become critical complements to Snowflake. That is not to say these companies are destined to be small features – with Snowflake, BigQuery, and Redshift doing a combined >$5B in revenue in 2021, which we can expect to grow to >$10B within a few years, there’s plenty of revenue to go after already. Moreover, companies like Monte Carlo*, which deals primarily with metadata, or dbt Labs, which builds a data model, play at a layer of abstraction above the underlying data, which gives them an opportunity to build broader platforms that treat the warehouse as a (sophisticated) primitive.

Broadening Data Consumption

Once companies have ingested their data, stored it in the cloud, and ensured it’s reliable, secure, properly modeled, and discoverable, they still need to do the hard part – making that data useful for their business. Despite the promises of “Big Data,” for most, this amounts to simple BI dashboards. As more companies become truly data-driven, defined as using data to guide and at times autonomously make decisions, I expect data consumption to expand across two vectors.

Extending Analytics: Traditional analytics is moving away from SQL-dependency with low-code tools like Sigma and Metabase, while more sophisticated companies are moving their analytics to real-time and exposing dashboards to end users with products like Materialize and StarTree.

Democratizing ML: ML works for the most advanced technical orgs and pretty much no one else. Products like Rasgo help companies tackle major infrastructure challenges, like feature engineering, on top of their existing Snowflake setup, while others like Pecan* allow analysts to manage the entire model building process without code, limiting the need for specialized talent.

Snowflake as an Operational Back-End

In the long term, Snowflake has an opportunity to move beyond analytics and even ML and fundamentally change the way enterprise software is built. Historically, software has bundled the application with the database, meaning a product like Salesforce collects the data you input through its web app and stores it in a proprietary database. This has made software remarkably sticky, often to the detriment of the user who needs to tolerate poor UX and increasing prices. Snowflake is offering an alternative architecture; if customers are already moving their data from apps to Snowflake, why not use Snowflake as the database itself for analytical applications? This way they can control and manage one central database, giving enterprises flexibility to swap app vendors, and app developers increased context through access to data beyond what they have generated.

Architecture Shift (credit Jon Ying via Aaref Hilaly)[4]:

We’re already beginning to see this play out. Reverse ETL tools like Hightouch and Census are helping companies sync data from their warehouse back into apps to inform and even autonomously make decisions. These tools have an opportunity to expand and become a more robust middleware layer between Snowflake and operational apps. Other startups are building natively on Snowflake from day one. Hunters and Panther are helping security teams move away from Splunk and build their SIEM directly on Snowflake[5], and a crop of new PLG sales tools like Calixa and HeadsUp are leveraging Snowflake to generate a fuller view of the customer.
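For a sense of what a reverse ETL sync does mechanically, here is a toy sketch. It is not the Hightouch or Census API: an in-memory SQLite database stands in for the warehouse, `FakeCrm` is a hypothetical stand-in for an operational app, and the table, column, and threshold are all invented for illustration.

```python
import sqlite3
from typing import Dict


def build_demo_warehouse() -> sqlite3.Connection:
    """Create an in-memory table that plays the role of a warehouse model."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE account_usage ("
        "account_id TEXT PRIMARY KEY, weekly_active_users INTEGER)"
    )
    conn.executemany(
        "INSERT INTO account_usage VALUES (?, ?)",
        [("acme", 42), ("globex", 3), ("initech", 17)],
    )
    conn.commit()
    return conn


class FakeCrm:
    """Hypothetical operational app that accepts record upserts."""

    def __init__(self) -> None:
        self.records: Dict[str, Dict[str, object]] = {}

    def upsert(self, account_id: str, fields: Dict[str, object]) -> None:
        # Merge new fields into the existing record, creating it if needed.
        self.records.setdefault(account_id, {}).update(fields)


def sync_usage_to_crm(
    conn: sqlite3.Connection, crm: FakeCrm, min_users: int = 10
) -> int:
    """Query the warehouse, then push qualifying rows into the app."""
    rows = conn.execute(
        "SELECT account_id, weekly_active_users FROM account_usage "
        "WHERE weekly_active_users >= ? ORDER BY account_id",
        (min_users,),
    ).fetchall()
    for account_id, weekly_active_users in rows:
        crm.upsert(
            account_id,
            {"weekly_active_users": weekly_active_users, "is_hot_lead": True},
        )
    return len(rows)


if __name__ == "__main__":
    warehouse = build_demo_warehouse()
    crm = FakeCrm()
    print(sync_usage_to_crm(warehouse, crm))  # 2
    print(sorted(crm.records))                # ['acme', 'initech']
```

The point of the pattern is that the warehouse stays the source of truth and the app becomes a destination, which is the reverse of the usual app-to-warehouse flow.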

The long-term implications of this shift are massive. For enterprises, separating the application and the data layer gives them control over their data, and critically, lowers switching costs, enabling them to try new products and pressure vendors to innovate in order to keep their business. As low- and no-code tools mature, Snowflake also gives them a unified place on which to build apps. For software developers, building on Snowflake can enable faster growth. By offloading data storage, they can ship faster by focusing on the application logic and deliver value from the get-go by ingesting a customer’s existing data. Particularly if a product is storage heavy, by billing for storage through Snowflake, startups can price more aggressively than incumbents and remove friction in their GTM motion.

This new architectural model, while promising, is not without its challenges. When apps hand over the data storage responsibility to Snowflake, they also force the customer to manage the data model more actively, especially if they begin to join data from disparate apps. This extends to security, as a customer will likely not want every app to access every row of data in their warehouse, requiring more granular authorization and access control. Once apps begin to push Snowflake’s performance limits, we will likely see a need for real-time middleware and distributed caching on top of the warehouse. As the Snowflake app ecosystem expands, I expect the company itself to address some of these issues, new startups to address others, and many apps to continue to bundle a database to avoid these challenges.
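The access control point is easier to see with a small example. The sketch below is plain Python, not any warehouse’s actual policy syntax; in practice this filtering would be enforced inside the warehouse rather than in application code. `AppPolicy`, `read_as_app`, and the sample accounts are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Dict, FrozenSet, List

Row = Dict[str, object]


@dataclass(frozen=True)
class AppPolicy:
    """What one app may see: which columns, and which rows."""

    allowed_columns: FrozenSet[str]
    row_filter: Callable[[Row], bool]


def read_as_app(rows: List[Row], policy: AppPolicy) -> List[Row]:
    """Return only the rows and columns the app's policy permits."""
    return [
        {col: val for col, val in row.items() if col in policy.allowed_columns}
        for row in rows
        if policy.row_filter(row)
    ]


# Hypothetical shared table that several apps would like to read.
ACCOUNTS: List[Row] = [
    {"account": "acme", "region": "EMEA", "arr": 120, "email": "a@acme.test"},
    {"account": "globex", "region": "AMER", "arr": 300, "email": "g@globex.test"},
    {"account": "initech", "region": "EMEA", "arr": 45, "email": "i@initech.test"},
]

# A sales tool scoped to EMEA that never needs contact emails.
EMEA_SALES_APP = AppPolicy(
    allowed_columns=frozenset({"account", "arr"}),
    row_filter=lambda row: row["region"] == "EMEA",
)

if __name__ == "__main__":
    for visible in read_as_app(ACCOUNTS, EMEA_SALES_APP):
        print(visible)
```

Every additional app reading from the shared warehouse needs a policy like this, which is part of why I expect the authorization burden to grow with the ecosystem.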

The Streamlit Acquisition

While much of the vision I’ve described may seem aspirational, we’re seeing it begin to materialize in Snowflake’s biggest strategic move yet – an $800M acquisition of Streamlit*, the company behind a popular open source project for building data apps. I believe this single acquisition strengthens all the ecosystem opportunities I’ve detailed.

Unbundling Data Engineering: Streamlit’s mission is to make it easier to build and share data apps. It allows data scientists to build and deploy models with beautiful front-ends without an army of data engineers or front-end developers.

Broadening Data Consumption: In doing so, it also makes data more consumable across the enterprise, since business users can now interact with visual apps, rather than looking at model outputs. The Streamlit team treated viewers as critical personas from day one, focusing on making interacting with apps easy.

Snowflake as an Operational Back-End: Finally, as Benn Stancil summarized well in a recent blog post, Streamlit is a new way of interacting with the database that could form the foundation for a more robust middleware layer between Snowflake and developers looking to build fully-featured apps on top of it. We’ll need to wait and see if this materializes, but Benn outlines a compelling case for Snowflake itself to step in and make a decoupled architecture a reality.

A New Software Transition

The story in software over the past decade has been the move from perpetual licenses to SaaS, and more recently the move within SaaS to usage-based pricing. These transitions have brought benefits to the end customer in the form of more continuous improvement from vendors and a better alignment of pricing and ROI. An ecosystem of data apps on top of Snowflake could represent a new transition. The warehouse as a system of record across apps is great for customers in that it reduces switching costs, thereby putting pressure on incumbents to innovate. But it’s also great for founders in that it opens up previously “won” markets to new competition – if the “system-of-record” moat erodes, giants like Salesforce could suddenly look vulnerable.

This is not to say that all software will become Snowflake-centric – Databricks should see a similar ecosystem emerge around it as it pursues its Lakehouse vision, and projects like Apache Iceberg offer a vision for unified data architecture without vendor lock-in. But the speed at which it has captured the market is allowing Snowflake to set the tone, creating a world of opportunity for ambitious founders.

[1] I’m going to assume basic knowledge of what these companies do and link to good explainers where possible. Technically is a good go-to resource.

[2] Per one survey, the number of enterprises with CDOs rose from 12% in 2012 to 65% in 2021,